Dear Readers: We will be releasing a new episode of our Sabato’s Crystal Ball webinar series next Wednesday morning. It will be posted on our YouTube channel, UVACFP, and we will also send out the direct YouTube link in next Wednesday’s issue of the Crystal Ball. We’ll be discussing the Georgia Senate runoffs, continuing fallout from the election, the 2022 Senate map, and more.

If you have any questions you would like us to answer during the webinar, please email us at [email protected].

The Editors

KEY POINTS FROM THIS ARTICLE

— Prior to the election, several prominent political scientists forecast the election in PS: Political Science and Politics.

— In aggregate, the forecasts performed very well.

— However, several individual forecasts missed the mark, and this election showed the importance of questioning the assumptions of models in the midst of an unusual election.

Assessing 2020’s political science forecasts

The October 2020 issue of PS: Political Science and Politics included 10 forecasts of the national popular vote and seven forecasts of the electoral vote by prominent political science forecasters. Some of these forecasts were based on longstanding models while others were novel. Some were based on national data and others on state-level data. Some of the forecasts were originally made several months before the election and others much closer to the election. And some of the forecasts turned out to be quite accurate while others turned out to be far off the mark.

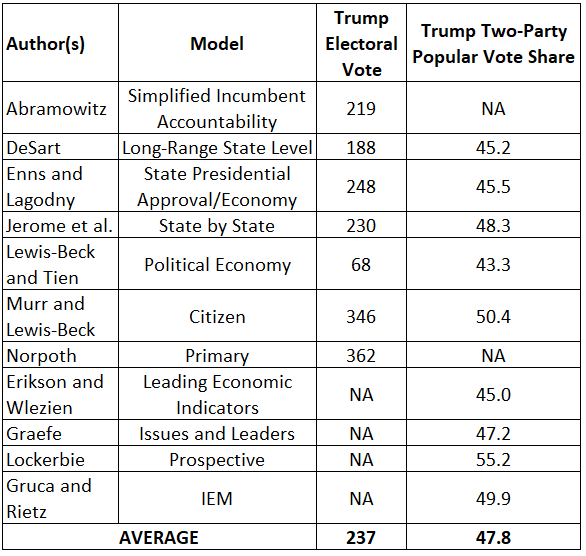

Table 1: Political science forecasts of the 2020 presidential election

Table 1 presents a summary of the forecasts of both the national popular vote and the electoral vote. When averaged and examined as a group, the forecasts came quite close to the actual election results, predicting that President Trump would receive 237 electoral votes and 47.9% of the two-party popular vote. (It now appears that Trump will actually receive 232 electoral votes and very close to 47.8% of the two-party popular vote.)
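Because the forecasts are stated as shares of the two-party vote, it can help to see how a share translates into a margin. The short Python sketch below is only an illustration, not part of any forecaster's method: the function name `two_party_margin` is hypothetical, and the inputs are the two Trump shares quoted above. Note that a two-party margin is not quite the same as a lead in the overall national vote, which also counts third-party ballots.

```python
def two_party_margin(trump_share_pct):
    """Return Biden's two-party margin in points, given Trump's two-party share.

    With only two parties, Biden's share is 100 minus Trump's share,
    so the margin is (100 - s) - s = 100 - 2s.
    """
    return round(100 - 2 * trump_share_pct, 1)

# Shares quoted in the article (percent of the two-party vote).
average_forecast_share = 47.9   # average of the political science forecasts
apparent_actual_share = 47.8    # apparent result at the time of writing

forecast_margin = two_party_margin(average_forecast_share)
actual_margin = two_party_margin(apparent_actual_share)

print(f"Average forecast: Biden by {forecast_margin} points (two-party)")
print(f"Apparent result:  Biden by {actual_margin} points (two-party)")
```

On these figures, a 0.1-point difference in share corresponds to a 0.2-point difference in margin.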

However, the fact that the averages of these popular and electoral predictions were quite accurate conceals the extremely wide variation in the accuracy of the individual forecasts.

Among the predictions of the electoral vote, the forecasts by Jerome et al., myself, and Enns & Lagodny came closest to the actual result, missing by two, 13 and 16 electoral votes respectively. On the other hand, the forecasts by Murr & Lewis-Beck and Norpoth were far off the mark, overestimating Trump’s total by 114 and 130 electoral votes respectively. And the forecast by Lewis-Beck & Tien was even farther off the mark in the opposite direction, underestimating Trump’s total by 164 electoral votes. The two different Lewis-Beck models managed to dramatically miss the mark in both directions.
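To make concrete how an accurate average can conceal large individual misses, here is a minimal Python sketch. It uses only the three forecasts whose direction of error is stated in the text, together with the apparent total of 232 electoral votes for Trump. It is not a reconstruction of Table 1, and the variable and function names are illustrative.

```python
# Apparent electoral votes for Trump, as reported in the article.
ACTUAL_TRUMP_EV = 232

# Signed misses (forecast minus actual) for the three forecasts whose
# direction the text states. Positive means Trump was overestimated.
signed_errors = {
    "Murr & Lewis-Beck": 114,
    "Norpoth": 130,
    "Lewis-Beck & Tien": -164,
}

def mean(values):
    """Arithmetic mean of an iterable of numbers."""
    values = list(values)
    return sum(values) / len(values)

# Forecast implied by each miss: actual result plus the signed error.
implied_forecasts = {
    name: ACTUAL_TRUMP_EV + err for name, err in signed_errors.items()
}

# Signed errors partly cancel when averaged; absolute errors do not.
mean_signed_error = mean(signed_errors.values())
mean_absolute_error = mean(abs(err) for err in signed_errors.values())

for name, forecast in implied_forecasts.items():
    print(f"{name}: implied forecast of {forecast} electoral votes")
print(f"Mean signed error:   {mean_signed_error:+.1f}")
print(f"Mean absolute error: {mean_absolute_error:.1f}")
```

Even among these three large misses, the errors in opposite directions offset one another, so the mean signed error is far smaller than the typical size of a miss.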

Among the predictions of the popular vote, it is more difficult to rank the accuracy of the forecasters because the precise final two-party popular vote margin remains unknown. Joe Biden’s current lead in the national popular vote is about four points, and that will probably grow slightly as the final results are tallied. Based on this estimate, the forecasts by Graefe and Jerome et al. were the closest. On the other hand, two forecasters actually predicted that Trump would win the popular vote: Murr & Lewis-Beck predicted a very narrow Trump margin, while Lockerbie predicted a Trump margin of more than 10 points.

What caused some of the political scientists’ forecasts to miss the mark so badly in this election? Partly, it may be the unusual circumstances of an election in which the incumbent president was viewed by a large percentage of voters as not responsible for the severe economic downturn brought on by the coronavirus pandemic. Thus, models incorporating traditional measures of economic conditions overestimated the negative impact of the recession on President Trump’s support. The highly unusual character of the 2020 economic downturn, especially the fact that it was deliberately induced in an effort to control the coronavirus pandemic, was the major reason that I decided to modify my own model and exclude the economy as a factor in forecasting the 2020 outcome.

In addition, however, some of the models seem to be based on questionable assumptions about the behavior of the American electorate. For example, the Murr & Lewis-Beck model is based on the belief that citizens’ expectations of who will win an election can be used to predict the actual result. However, the fact that Trump was seen as the favorite to win a second term until quite close to the date of the election did not mean that voters were actually planning to vote for him. It was probably more a reflection of the fact that voters generally expect an incumbent president to be reelected.

The Norpoth “primary model” produced one of the biggest misses this year. This model is based on the assumption that early primary results can provide an accurate gauge of the ability of candidates to unite their party’s voters in the general election. That may sometimes be true, especially when an incumbent faces a difficult primary challenge, but it clearly was not the case in 2020. Despite his early stumbles in the Democratic primaries, Joe Biden was able to unite Democratic voters behind his candidacy once he had secured the nomination. Moreover, that fact was evident quite early in the election year as public polls consistently showed Biden leading Trump.

Perhaps the lesson of the 2020 election for future forecasts is that forecasting models sometimes need to take into account unusual circumstances affecting a specific election. When a model yields a prediction that appears to be clearly out of line with reality, forecasters should consider modifying or abandoning the model rather than doubling down on it.

Alan I. Abramowitz is the Alben W. Barkley Professor of Political Science at Emory University and a senior columnist with Sabato’s Crystal Ball. His latest book, The Great Alignment: Race, Party Transformation, and the Rise of Donald Trump, was released in 2018 by Yale University Press.